Nvidia’s Robotic Pen-Twirling Mastery: Empowered by Generative AI Advancements

Nvidia is combining generative AI with reinforcement learning to improve robots’ fine motor skills. Generative AI models such as Google DeepMind’s RT-2 handle high-level tasks well, but controlling a robot’s individual joints for fine manipulation remains beyond their scope. A recent result from Nvidia’s Eureka program suggests a way to bridge that gap.

The work, by lead author Yecheng Jason Ma and colleagues at Nvidia, the University of Pennsylvania, Caltech, and the University of Texas at Austin, uses large language models to help robots learn dexterous tasks, such as manipulating objects with their hands.

Eureka runs inside a computer-simulated robotics environment, where it uses language models to define the goals that guide a robot’s low-level control. Although it is currently confined to simulation, the work is a step toward controlling physical robots in the real world.

Experts such as Sergey Levine have pointed out the limitations of language models on tasks that involve physical interaction, and Nvidia takes a different approach. Rather than having the language model instruct the robot directly, Eureka has it craft rewards, the signals that tell a reinforcement learning agent how well it is doing. The team hypothesizes that language models can design these rewards well enough to surpass human-designed ones.
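To make the idea of a reward concrete, here is a minimal sketch of a hand-written reward for a spinning task. The function name, its parameters, and the formula are illustrative assumptions, not code produced by Eureka or taken from the paper.

```python
import math

def spin_reward(angular_velocity, target_axis, dropped=False, drop_penalty=1.0):
    """Toy reward: spin rate of an object about a target axis.

    Every name and the formula itself are hypothetical, for illustration only.
    """
    # Normalise the target axis so the dot product measures spin rate along it.
    norm = math.sqrt(sum(a * a for a in target_axis))
    axis = [a / norm for a in target_axis]
    spin = sum(w * a for w, a in zip(angular_velocity, axis))
    # Penalise dropping the object so speed is not rewarded at any cost.
    return spin - (drop_penalty if dropped else 0.0)
```

A reinforcement learning agent would receive a number like this after each simulated step and adjust its behavior to increase it. Choosing the terms and their weights is the design problem that Eureka hands to the language model.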

Through a process of reward “evolution,” GPT-4 is prompted with data about the robot’s environment, proposes candidate rewards, and refines them over successive rounds. With this approach, Eureka reached human-level performance in reward design across diverse robotic tasks, outperforming expert human rewards on a majority of them.
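The evolutionary loop can be sketched in a few lines. This is a toy under stated assumptions: `propose` stands in for the language model by randomly perturbing reward weights, and `evaluate` stands in for a full reinforcement learning run with a made-up score. Neither function reflects Eureka’s actual code.

```python
import random

def evaluate(weights):
    """Stand-in for RL training: returns a task score for a reward candidate."""
    # Hypothetical fitness that peaks at weights (1.0, 0.5).
    return -((weights[0] - 1.0) ** 2 + (weights[1] - 0.5) ** 2)

def propose(parent, n, rng, scale=0.3):
    """Stand-in for the language model: returns n variations of the parent."""
    return [tuple(w + rng.gauss(0.0, scale) for w in parent) for _ in range(n)]

def evolve(initial, generations=20, samples=8, seed=0):
    """Keep the best-scoring candidate and propose new ones from it each round."""
    rng = random.Random(seed)
    best, best_score = initial, evaluate(initial)
    for _ in range(generations):
        for candidate in propose(best, samples, rng):
            score = evaluate(candidate)
            if score > best_score:
                best, best_score = candidate, score
    return best, best_score
```

Calling `evolve((0.0, 0.0))` returns the best weights found and their score. In the real system, each candidate is a reward written by GPT-4, and scoring it means training a policy in simulation.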

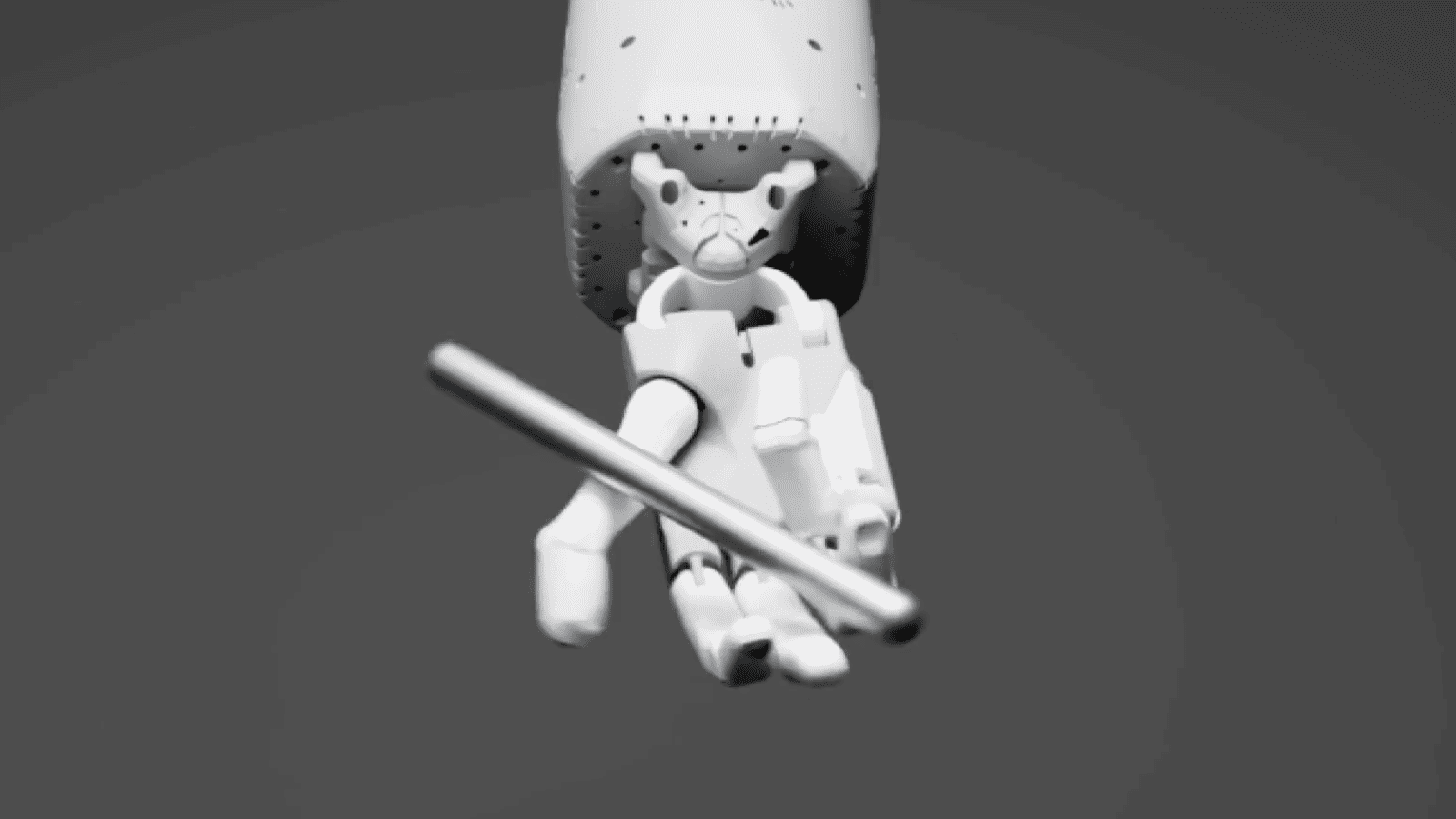

One notable example is teaching a simulated robot hand to twirl a pen, much like a bored student in a classroom. The team achieved this by combining Eureka with curriculum learning, which breaks the task down into manageable segments.
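Curriculum learning can be sketched as a loop that moves to the next segment only once the current one is learned well enough. The stage names, the `threshold` value, and the toy trainer below are assumptions for illustration and do not describe the team’s actual training setup.

```python
def run_curriculum(stages, train_stage, threshold=0.8, max_rounds=50):
    """Train stage by stage, advancing once the success rate reaches threshold."""
    completed = []
    for stage in stages:
        for _ in range(max_rounds):
            if train_stage(stage) >= threshold:
                completed.append(stage)
                break
        else:
            # Stage never reached the threshold; later stages depend on it.
            break
    return completed

def make_toy_trainer(gain=0.25):
    """Hypothetical trainer whose success rate rises by `gain` per call."""
    progress = {}
    def train_stage(stage):
        progress[stage] = min(1.0, progress.get(stage, 0.0) + gain)
        return progress[stage]
    return train_stage
```

For example, `run_curriculum(["stage_1", "stage_2"], make_toy_trainer())` returns both stages, because the toy trainer reaches the threshold after four rounds on each.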

Moreover, combining human-designed and AI-designed rewards outperformed either approach on its own. This result points to a possible partnership between human designers and AI assistants, akin to GitHub Copilot, that draws on the strengths of both.

Nvidia’s use of generative AI to refine reinforcement learning rewards points toward a future in which robots carry out intricate tasks with finesse, combining human expertise with the capabilities of AI.